Pre-training and post-training survey questions: all you need to know

Before the training starts. After it ends. Thirty days later, when the dust has settled and real life has reasserted itself. Each of these moments is an opportunity to gather important information about your participants, about your design, and ultimately about whether what you did actually mattered.

Surveys are one of the most accessible tools a trainer or L&D professional has for turning a single session into a longer, more impactful collaborative process. By asking questions before the workshop, you can begin to reach out to participants. They learn a bit about you, you learn a bit about them, and you can tailor your work accordingly.

As for collecting data after the session, it’s a no-brainer: that is what you most need to evaluate how things went, both in terms of facilitator performance and in terms of longer-term impact. And yet, in the rush to prepare content and logistics, surveys often become an afterthought: a five-question form fired off at the end of the session, if they happen at all.

This article is a practical resource for doing surveys better. You’ll find a question bank you can copy and adapt for pre-training surveys, post-training surveys, and optional 30-day follow-ups. We’ll also look at how to keep your questions sharp, inclusive, and actually useful, and how to fit all of this into your facilitation workflow without adding a mountain of admin.

What is a pre-training survey?

A pre-training survey is a short questionnaire sent to participants before a training session or program begins. Its main purpose is to help the facilitator, trainer or instructor understand who is in the room: their experience level, their expectations, what they already know, what they’re hoping to get out of the session, and any contextual factors (role, team, day-to-day challenges) that might shape the design.

Done well, a pre-training survey is less a form and more a conversation starter. It signals to participants that their perspective matters before they’ve even stepped into the room.

When to send it: ideally about one or two weeks before the session. The whole point of a pre-training survey is that you can actually do something with the responses: adjust your design, reorder priorities, flag a concern to the client.

If it goes out two days before, you lose that window. If you are planning ahead and send it out too early (say, a month before the session), it will no longer serve the extra function of preparing participants with guided reflections.

If you’re designing a longer program and need to go deeper into organizational needs before you even get to survey design, a Training Needs Assessment (TNA) is the natural starting point. You’ll find a ready-made TNA template in SessionLab to make that process faster too.

Image credit Fabio Riva, at the Facilita2026 gathering in Italy

What is a post-training survey?

A post-training survey is sent after a session or program ends. It collects participants’ reactions, self-assessed learning, and intentions to apply what they have learned or decided. At its most basic, it’s a satisfaction check. At its most useful, it’s the beginning of an impact conversation.

The distinction matters. A participant saying “I really enjoyed this” is useful feedback for the trainer. A participant saying “I intend to use this approach in my next team meeting, but I’m worried my manager won’t support it” is useful information for the organization.

Meaningful evaluation is only possible when you know where you are going. If you don’t know where you are headed, how can you measure if you actually reached your desired destination?

Melanie Martinelli, CEO of the Institute for Transfer Effectiveness, quoted in the State of Facilitation 2026 report

When to send it: the closer to the session the better. Ideally within 24 hours, while the experience is still fresh. If you can build five minutes into the session closing to fill it out together (more on this in the survey fatigue section below), that might be a good call. Waiting longer means lower response rates and hazier memories. For longer programs spanning multiple days or sessions, a short check-in survey between modules can also be useful to catch issues mid-stream rather than only at the end.

Why training surveys matter (and why we’re still not doing them well)

According to the State of Facilitation 2026 report, fewer than 1 in 3 facilitators have agreed on measurable performance indicators with their clients before a session takes place. And 43.5% of respondents identified lack of follow-up as the main barrier to impact. Most facilitators, the report finds, are evaluating their work—but primarily in terms of their own performance, through end-of-session feedback they mostly keep to themselves.

Pre- and post-training surveys are one of the most direct ways to improve this. A pre-survey helps you and your client agree on what success looks like. A post-survey creates a record of what shifted. Together, they give you evidence rather than mere anecdotal impressions.

So why aren’t we doing them? A few reasons come up again and again in practice:

Time pressure. Survey design gets squeezed out by the rush to prepare content and logistics. It feels like an extra, not a core part of the work.

Unclear ownership. In corporate settings especially, it’s not always obvious who is responsible for evaluation: the facilitator, the L&D team, or the commissioning manager? When it belongs to everyone, it often ends up belonging to no one.

Fear of what the data might say. This one doesn’t get talked about much, but it’s real. A post-training survey that reveals participants didn’t find the content relevant, or don’t intend to apply it, is uncomfortable. It’s easier not to ask.

Tool overload. Many L&D teams are already juggling multiple systems. Adding another survey tool feels like more friction, not less.

The good news is that none of these are insurmountable. Survey design doesn’t have to be elaborate: three all-purpose questions (what went well? what would you change? what else do we need to know?) will tell you more than no questions at all.

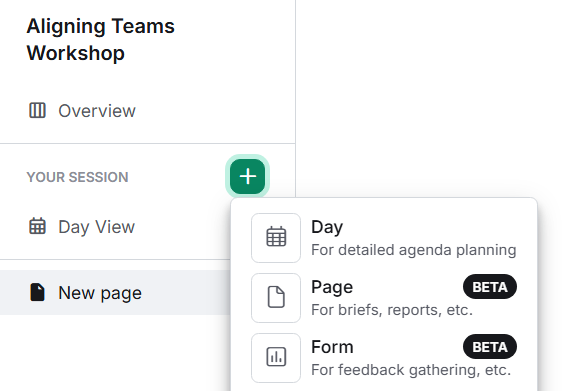

Ownership becomes clearer when surveys are built into the standard workflow from the start. We’ve been thinking about these barriers at SessionLab, which is why we’ve included Forms that are directly connected to your agenda and facilitation workflow, getting rid of some of that friction by design. All results are collected next to your agenda for quick reference.

And the data, even when it’s uncomfortable, is almost always more useful than the alternative: finishing a program and genuinely not knowing whether it worked. The willingness to seek honest feedback and use it is also, incidentally, one of the marks of a truly skilled trainer. If you’re thinking about what separates good trainers from great ones, this piece on the qualities of a good trainer is worth a read.

What I’ve learned from running pre-training surveys

I run pre-training surveys before almost every program I design and facilitate. Without exception, they teach me something I wouldn’t have known otherwise.

Before a recent facilitation training with a team in a small company working in digital education, I sent a short pre-survey asking, among other things, what previous training experiences had been like for participants.

I built it directly in SessionLab Forms, attached to the same session I was designing, which meant I could read through responses with the draft agenda open alongside them. And those responses were illuminating. Various people used the open text field to describe years of slide-heavy, passive sessions that had left them feeling talked at rather than engaged. It was honest, a little raw, and it told me exactly what I needed to know: this group had experience fatigue, and they needed to feel that something different was genuinely on offer.

It also validated my preference for participatory, active workshop formats, both because that is what I personally like to do and believe in, and as the right design response to this specific group’s history. Having that data then helped me defend my choices when the CEO began to get cold feet about doing something that deviated from their past practice.

That’s the kind of intelligence a pre-survey gives you. It’s a way to start getting a feel for the group before ever walking in the room.

Training survey question bank

In the sections below you will find lists of questions you can take and use for your pre-training surveys, post-training surveys, and 30-day follow-ups.

Feel free to mix and match, and adapt these to your needs. If you’re working in SessionLab, this is a good moment to open a Form alongside your agenda and start building as you read.

Whatever you do, don’t use them all: I recommend short, focused surveys, so as not to overwhelm participants (or yourself) with too much to do and too much data to deal with. Aim for around 5 questions, 10 at most. Group them around what you most need to know.

It’s good practice to combine a few open questions with some sliding scale or checkboxes, so that people who are in a rush can quickly provide a few data points, even if they don’t have the will to write longer, more thoughtful responses.

Personally, I feel that there is no way I will be able to think about everything a potential participant might possibly want to communicate to me before a workshop, and ask exactly the right questions. This is why I make it a practice to always include, at the end of the survey, an all-purpose question that’s something like:

- Is there anything else that you would like to share?

In my experience, most participants will leave this section blank. But the ones who do use it have great reasons to: often it’s a note on some logistical need I hadn’t yet considered (here’s a real-life example: “You should know that it’s very hard to park near the venue on Saturdays because of the local market, I suggest you let people know in advance”). Other times it’s just a cheerful “thanks, looking forward to the workshop,” which may not be the most useful comment in the world, but will give me a smile for sure!

Pre-training surveys question bank

Pre-training surveys can do several things at once, and it helps to know which job each question is doing: gathering logistics and context, shaping the design, or preparing participants for what’s ahead. Here are questions for all three.

Questions to support logistics

These help you anticipate practical needs and avoid surprises on the day.

- What is your current role?

- How long have you been in your current role?

- Are there any scheduling constraints or commitments we should know about?

- What is your dietary preference (e.g. vegetarian, vegan)?

- Do you have food allergies we should be aware of?

- Is there something we could do to support you with any accessibility needs during this session?

- What language do you feel most comfortable working in?

For all workshops, especially online or hybrid ones, this is a great spot to weave in some extra information in between questions. You might, for example, want to add a reminder to find a quiet place to work from, or that there is an invitation to keep cameras on, or simply that the workshop will be interactive.

Questions to help you design the session

These give you design intelligence: what the group already knows, what they need, and what might get in the way.

- How familiar are you with [topic]? (Scale: not at all familiar – very familiar)

- What do you already know or do well when it comes to [topic]?

- What would you most like to get out of this training? (Add a checklist if you have a list of possible outcomes)

- What challenges or situations related to [topic] do you face most often in your work?

- What has worked well in training or workshops you’ve attended in the past?

- What has made previous training experiences less useful or engaging for you?

- Is there anything about this topic that you’re skeptical about or that you’d like us to address directly?

- What would make this training feel like a success for you personally?

Photo credit Fabio Riva from the Facilita2026 gathering in Italy.

Questions to prepare and engage participants

Sometimes questions are designed less to give the trainer information than to help participants prepare. More reflective questions help respondents arrive at the workshop feeling ready, and give them a sense of what to expect. These are subtle ways to start getting participants on your side, as partners in the learning experience rather than passive listeners.

- What’s one question you’re hoping this training will answer?

- Is there a specific situation or challenge you’d like to be able to handle better after this training?

- What would make this workshop a good use of your time?

- On a scale of 1–5, how confident do you currently feel about [skill or topic]?

- What would need to happen for you to apply what you learn here to your actual work?

Post-training survey question bank

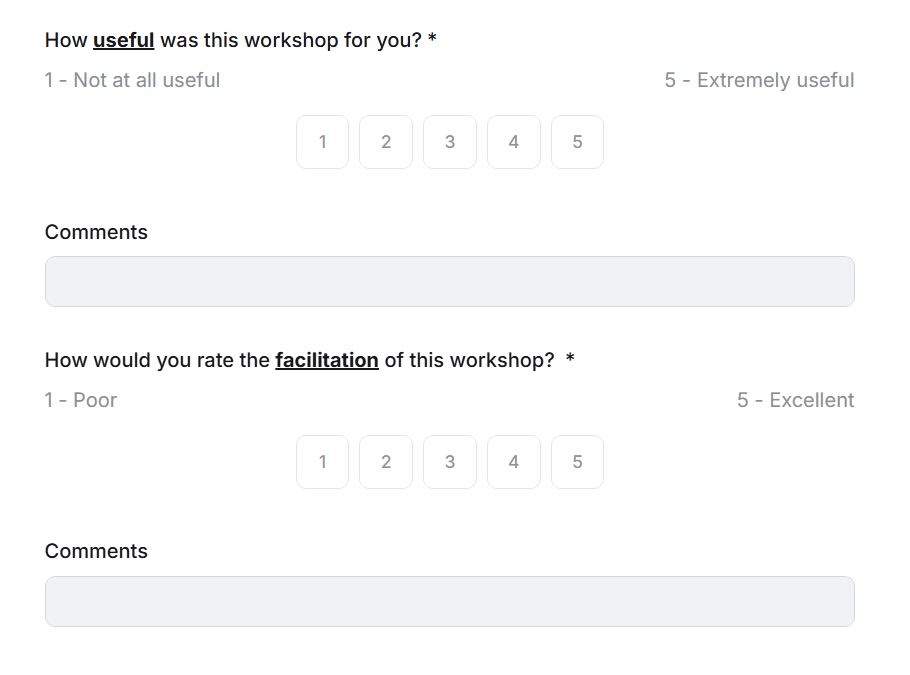

Post-training surveys do two different jobs, and it helps to keep them distinct: evaluating how the session went (trainer performance, design quality) and beginning to assess impact (what changed, what will participants do differently). Here are questions for both.

Questions to evaluate trainer and session performance

- How would you rate the overall quality of this training? (Scale: 1–5)

- Was the content relevant to your work and daily challenges?

- Was the session well-paced? (Too slow / About right / Too fast)

- Was there enough time for questions, discussion, and practice?

- What worked particularly well in this training?

- What would you change or improve?

- How was the training in three words?

Personally, I particularly like to use the question about defining the training in three words. It really helps me capture the mood at the end of a workshop, and it often doubles as a pretty neat visual to include in a final report.
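
If you export the three-word answers from your survey tool, a few lines of Python are enough to tally them before turning them into a visual. This is only a minimal sketch: the sample answers, the `tally_three_words` helper, and the small list of filler words are all made up for illustration, and your export will likely look different.

```python
import re
from collections import Counter

# Filler words to ignore; an illustrative list, extend it as needed.
FILLER_WORDS = {"and", "the", "a", "very"}


def tally_three_words(responses):
    """Count how often each word appears across free-text answers."""
    counts = Counter()
    for answer in responses:
        # Match runs of letters; punctuation, digits and spaces act as separators.
        words = re.findall(r"[^\W\d_]+", answer.lower())
        counts.update(word for word in words if word not in FILLER_WORDS)
    return counts


# Hypothetical answers, invented for this example.
sample_responses = [
    "Engaging, practical, fun",
    "practical; intense; engaging",
    "Fun and practical",
]

word_counts = tally_three_words(sample_responses)
for word, count in word_counts.most_common(5):
    print(f"{word}: {count}")
```

The resulting counts can be pasted into whatever word cloud or chart tool you already use for reports.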

Questions to assess confidence shift and learning

- How confident do you feel about [skill or topic] now, compared to before the training? (Scale: much less confident – much more confident)

- What is the most useful thing you learned or were reminded of today?

- Was there anything that surprised you?

- Is there anything you feel you still need to learn or practice on this topic?

Questions to explore application intent

These are some of the most valuable questions in a post-training survey, and the most often skipped. They start to bridge the gap between “I enjoyed this” and “this changed how I work.”

- What is one thing you intend to do differently as a result of this training?

- In what situation do you plan to apply what you’ve learned first?

- How confident are you that you’ll be able to apply this learning in your day-to-day work? (Scale: 1–5)

- What might get in the way of applying what you’ve learned?

- What support would help you put this into practice?

Questions about managerial and organizational support

Particularly relevant for in-house L&D programs, where the environment participants return to shapes whether learning sticks.

- Does your manager know you attended this training?

- Are there organizational factors that might make it difficult to apply this learning?

- Would it be helpful to have a follow-up conversation with your manager or team about this training?

Aiming for impact: 30-day follow-up questions

Thirty days after a training session is one of the most revealing moments in the learning cycle. It’s far enough out that initial enthusiasm has worn off, close enough that people remember what they intended to do.

A short follow-up survey (3–5 questions maximum) sent 30 days after a session can tell you more about real impact than any post-session evaluation.

When to send it: thirty days is a useful rule of thumb, but the real principle is: long enough after the session that initial enthusiasm has settled and real behavior change (or lack of it) has had time to show up. Some practitioners run follow-ups at 30, 60, and 90 days for high-stakes programs. For most programs, one well-timed follow-up is a significant step forward from nothing at all. Set a calendar reminder the moment the session ends, or you’ll forget.
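
If you would rather compute the reminders than count days on a calendar, the timings suggested in this article (one to two weeks before, within 24 hours after, and thirty days after) can be sketched in a few lines of Python. The function name, the default of ten days' lead time, and the session date are assumptions for illustration, not fixed rules.

```python
from datetime import date, timedelta


def survey_send_dates(session_day, pre_lead_days=10, follow_up_days=30):
    """Return suggested send dates for the three surveys around a session.

    pre_lead_days: days before the session (one to two weeks is suggested).
    follow_up_days: days after the session for the follow-up survey.
    """
    return {
        "pre_training": session_day - timedelta(days=pre_lead_days),
        # The day after the session is the latest date that keeps the
        # post-training survey within roughly 24 hours.
        "post_training": session_day + timedelta(days=1),
        "follow_up": session_day + timedelta(days=follow_up_days),
    }


# Hypothetical session date, used only as an example.
schedule = survey_send_dates(date(2026, 3, 12))
for survey, send_on in schedule.items():
    print(f"{survey}: {send_on.isoformat()}")
```

For high-stakes programs, calling the function again with `follow_up_days=60` and `follow_up_days=90` gives the later check-in dates.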

In SessionLab, you can attach this follow-up Form to the same session as the original training, keeping all your data in one place and making it easy to compare responses over time.

- Have you had the opportunity to apply what you learned in this training?

- If yes: what did you try, and how did it go?

- If no: what got in the way?

- How has your confidence with [topic] changed since the training?

- Is there anything you’d like further support or practice with?

- What, if anything, has changed in how you work as a result of this training?

Thirty-, sixty-, and ninety-day data provide the clearest picture of where learning is translating into change.

Chris Taylor, founder and CEO of Actionable.co, quoted in the State of Facilitation 2026 report

Going deeper: when surveys aren’t enough

Surveys are great for gathering structured, scalable input. But they have limits: they capture what people are willing and able to articulate in a form, which isn’t always the full picture.

When you have the possibility, it’s worth supplementing surveys with brief 1:1 conversations. After a recent vibecoding workshop here at SessionLab, I followed up with a handful of participants individually, offering people the choice of answering in writing or having a short conversation with me. Some of the most interesting insights came from those conversations: things people wouldn’t have typed into a form but were happy to talk about.

For capturing and organizing that kind of qualitative input, I use Pages in SessionLab, which lets me keep interview notes, survey data, and the workshop agenda all in the same place. It’s a small thing, but having everything connected to the actual session design rather than scattered across different tools makes the follow-up process much easier to manage.

If you’d like a fuller picture of how all of this fits together—brief, agenda, Forms, and follow-up—the post on building a complete session design workflow in SessionLab is a good read.

For a deeper dive into impact assessment frameworks specifically designed for facilitation and training, the Institute for Transfer Effectiveness’ 12 Levers of Transfer Effectiveness is an excellent resource.

Tips to avoid feedback survey fatigue

A survey nobody fills in is worse than no survey at all: you get false confidence that you tried, without any of the data. Survey fatigue is real, and a few simple choices in how you design and frame your surveys can make a significant difference in response rates and quality.

Start with the basics: keep it short and make it feel worthwhile. Five to ten questions is usually enough. And while you’re at it, use the survey as an opportunity to share something useful in return. Include a brief note about how the session will run, what to expect, or what to prepare for. It turns a form into a two-way exchange rather than a one-sided data grab.

Similarly, tell people what the data is for. A single sentence (“Your answers will help me tailor the session to your needs and experience”) goes a long way. It also signals that someone will actually read their responses, which is not always a given.

On the subject of making surveys feel human: I sometimes start a pre-training survey with a completely throwaway question like “What did you have for breakfast?” It sounds silly, but it snaps people out of autopilot mode and gives them a clear sense that this isn’t going to be a dry, box-ticking exercise. Participants relax, and the responses that follow tend to be more thoughtful.

Think about tone and design too. A survey for senior executives might need to feel clean, fast, and frictionless. A survey for a creative team doing a team development day might benefit from something more playful and visually engaging. SessionLab Forms leans toward the clean and simple end of the spectrum, which works well for most organizations, but it’s worth thinking about what will feel right for the specific group you’re working with.

Finally, one of the most practical things you can do to improve your post-session response rate: build five minutes into the closing of your session to fill out the survey together. Instead of sending a link and hoping people get to it later, you do it in the room while the experience is still fresh. Response rates tend to go from unreliable to close to 100%, and it might offer some food for thought for post-workshop chit-chat.

Image credit Fabio Riva at the Facilita2026 gathering in Italy

How to avoid biased questions

Bias in survey questions usually creeps in through assumptions: assuming the participant enjoyed the session, that they have a manager, that they work in a certain kind of environment, or that they experienced something the way you intended them to.

When a question contains a built-in assumption, people tend to answer the assumption rather than their actual experience. The result is data that reflects what you hoped happened, not what did. A few practical principles to keep this in check:

- Avoid leading questions. “How much did you enjoy this training?” assumes enjoyment. “How would you describe your overall experience of this training?” leaves room for honesty.

- Don’t double-barrel. “Was the content relevant and well-paced?” is actually two questions. Split them up so you know which one people are answering.

- Watch your scales. Make sure rating scales are balanced (as many positive options as negative ones) and that you’re consistent throughout the survey.

- Offer an opt-out on sensitive questions. If you’re asking about barriers or challenges, include a “prefer not to say” option. People are more likely to answer honestly when they don’t feel cornered.

- Pilot your survey. Ask at least one person to test it before you send it out. They’ll catch the questions that don’t quite make sense.

Anonymous or not anonymous? That is the question

One of the first decisions to make when designing a survey is whether responses will be anonymous. There’s no universally right answer, but it’s worth thinking through deliberately rather than defaulting to whichever feels easier.

The case for anonymity is straightforward: people are more honest when they don’t feel observed. Especially in a corporate setting, where participants may worry about how their feedback reflects on them or their team, anonymity can be the difference between a polished non-answer and a genuinely useful one.

If you’re asking about barriers to application, manager support, or past negative training experiences, anonymity tends to produce richer, more candid responses.

The case against is equally practical. Without names attached, you lose the ability to follow up. If someone flags a significant barrier or expresses serious doubt about their ability to apply the learning, an anonymous response leaves you with the signal but no way to act on it.

For pre-training surveys in particular, knowing who said what can significantly shape how you design for specific individuals or subgroups. And in smaller teams, anonymity can be something of an illusion anyway, as people often know, or suspect, who said what.

A middle path worth considering: make the survey anonymous by default, but include an optional question at the end asking whether the participant is happy to be contacted to discuss their responses further. This way, those who want to stay anonymous can, and those who are open to a conversation can flag it. You get the honesty of anonymity and the option of follow-up where it matters most.

How to make your questions more inclusive

Inclusion in survey design is often overlooked, but it matters. This is especially true in corporate programs where participants may come from very different backgrounds, roles, and levels of familiarity with the subject. A survey that feels exclusive or tone-deaf undermines trust before the training has even begun.

Start with language. Avoid jargon, acronyms, or terms that assume a specific professional background; if you need a technical term, define it briefly. For global programs, watch for idiomatic expressions or cultural references that feel universal to one group but may be alienating or confusing to another.

Think about who might struggle with the format itself. Not everyone finds it easy to write in a second language, or to articulate experience in text form: make open-text questions genuinely optional and give people alternatives where you can. And watch your assumptions about work context: questions like “how will your manager respond?” assume a traditional hierarchy that may not apply to every organization. Consider whether participants might work in flat organizations, matrix structures, or with multiple stakeholders rather than one direct line manager.

Tips on demographic questions and self-identification

If your survey includes demographic questions—and there are good reasons it might, particularly if you want to understand whether the training is landing differently across different groups—how you ask them matters as much as whether you ask them at all.

- Explain why you’re asking. People are much more willing to share demographic information when they understand the purpose. A brief note like “We ask these questions to help us ensure our training is relevant and equitable for everyone” makes a real difference.

- Make demographic questions optional. No one should feel required to disclose information about their identity in order to complete a survey. Include a “prefer not to say” option on every demographic question, without exception.

- Use inclusive answer options for gender. Offer more than a binary choice. At minimum, include options such as “man,” “woman,” “non-binary,” “prefer to self-describe,” and “prefer not to say.” If your organization has an established set of options, use those for consistency.

- Avoid conflating categories. Race, ethnicity, nationality, and language are distinct things. Be precise about what you’re actually asking, and why. If you’re not sure you need the data, consider whether the question is necessary at all.

- Think carefully about disability and neurodiversity. If you’re asking about accessibility needs (which is a good idea in a pre-training survey), frame the question around what support would help the person participate fully, rather than asking them to self-diagnose or label themselves.

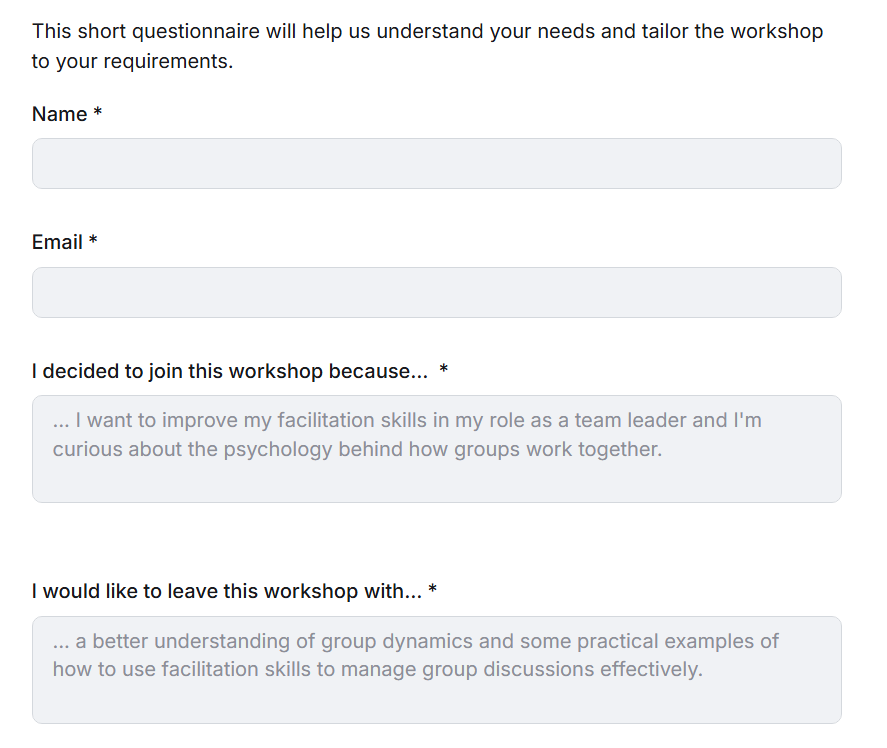

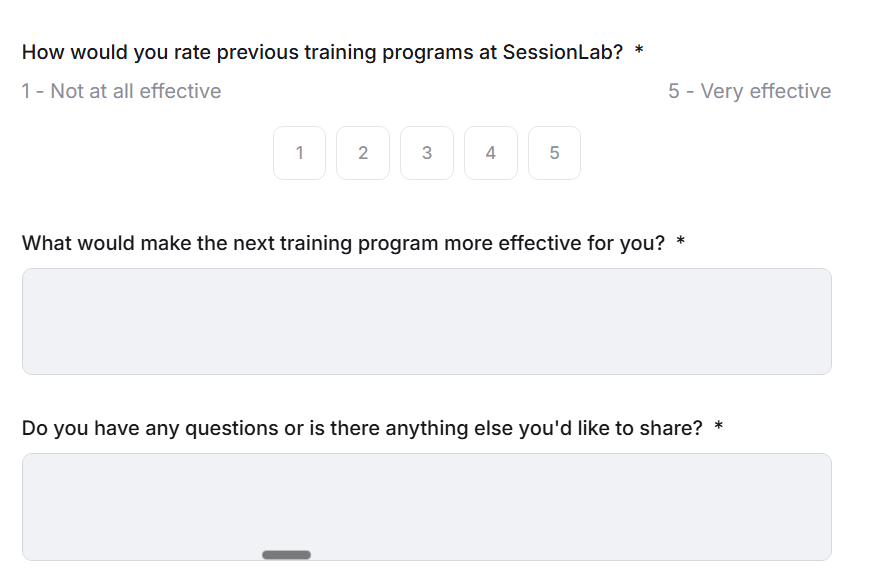

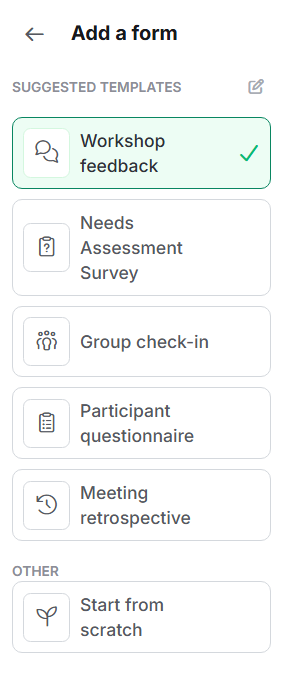

Getting started faster: SessionLab’s Forms templates

One of the practical barriers to running good surveys is the blank page problem. You know you should send a pre-training survey, but designing one from scratch (while also finalizing your agenda, briefing co-facilitators, and managing logistics) is one task too many. This is where SessionLab’s Forms templates earn their keep.

When you add a Form to a session in SessionLab, you get instant access to a set of ready-made templates designed for the most common survey moments in a facilitation workflow. A few examples of what’s available:

- Needs Assessment Survey. A ready-made version of a pre-training assessment of what participants need, what their past experiences have been, and what they hope to learn.

- Participant Questionnaire. A quick way to collect logistical needs and gather key information about participants, so you can tailor your design to their requirements, and pass on practical requests to the logistics team as appropriate.

- Meeting Retrospective. Designed for teams reflecting on how a session or project went. Useful if you’re running training as part of a longer program and want structured check-ins between sessions.

- Workshop Feedback. A post-session form covering overall experience, what worked, what didn’t, and key takeaways. A solid starting point for any post-training survey, ready to send the moment your session ends.

You can see an example of how this works in practice with this facilitator guide template, which includes a training agenda and three surveys intended to gather feedback at various points of the training lifecycle.

You can use any of these as-is, or adapt them, adding, removing, or reordering questions to fit your specific context. Once you’ve customized a template to your needs, you can save it as your own reusable template and pull it into any future session with a click.

For L&D teams running the same programs repeatedly this is particularly useful. Build your pre-training and post-training survey templates once, refine them after the first run, and reuse them consistently across the organization. Over time, you also get comparable data across cohorts, which makes it much easier to spot patterns and demonstrate impact.
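
As a rough illustration of what comparable data across cohorts can look like, here is a minimal Python sketch that summarizes answers to the same 1–5 rating question for two runs of a program. The cohort names and ratings are invented, and `summarize_cohorts` is a hypothetical helper rather than anything built into SessionLab.

```python
from statistics import mean

# Hypothetical 1-5 ratings for the same question, grouped by cohort.
ratings_by_cohort = {
    "spring": [4, 5, 3, 4],
    "autumn": [5, 4, 4, 5, 5],
}


def summarize_cohorts(ratings):
    """Return the number of responses and the mean rating for each cohort."""
    return {
        cohort: {"responses": len(values), "mean": round(mean(values), 2)}
        for cohort, values in ratings.items()
    }


summary = summarize_cohorts(ratings_by_cohort)
for cohort, stats in summary.items():
    print(f"{cohort}: {stats['responses']} responses, mean {stats['mean']}")
```

With groups this small, treat differences between cohorts as a prompt for a conversation rather than as proof of anything.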

If you’d rather not start from a template at all, the AI-powered form builder can generate a draft survey for you based on your session context. Describe what you’re training on and who the participants are, and it will suggest relevant questions, which you can then edit, rearrange, or add to as needed.

For a full walkthrough of how Forms works, including sharing options, anonymity settings, and how to review responses, the SessionLab help center has everything you need.

How SessionLab helps you run better training surveys

There are plenty of survey tools out there. Google Forms, Typeform, Mentimeter and SurveyMonkey are among the most commonly used by facilitators. Here at SessionLab we’ve also been experimenting with AI-powered survey tools like Harmonica, which takes a conversational approach to collecting qualitative data that can feel much more natural than a traditional form.

What SessionLab’s Forms offers that’s harder to replicate elsewhere is integration. Your pre-training survey questions and responses, your agenda, your facilitator notes, your post-session feedback form, and your follow-up data all live in the same place, connected to the same session. You’re not switching between tools or trying to remember which folder you saved the results in.

If you want to go further and capture 1:1 interview notes or qualitative follow-up data, Pages allows you to do that in the same workspace, so the full picture of a program’s impact stays organized and accessible, rather than spread across your inbox and various documents.

A final thought

Running good training surveys is, at its core, an act of care: for your participants, for your client or organization, and for the craft of training design. It says that you are invested in tailoring your work to the actual people in the room, and that you care about what actually happened, not just what you hoped would happen.

The question bank in this article is a starting point. Take what’s useful, leave what isn’t, and adapt it to the reality of your programs and your participants. The goal is a consistent habit of asking, listening, and using what you hear to make the next training a little better than the last.

That’s how impact gets built: one good practice habit at a time.
